Summary

Connect after the event: Fill this form. For questions or to add to the list, email passant.elagroudy@gmail.com

Attendance mode: CHI’24 in-person only

Date: Tue May 14th (11:00 - 12:20) (CHI program link)

Location: Hawaii Convention Center, Room 318A

Full Proposal: [CHI24-SIG] GenAI_for_HCI

Registration: No registration is required.

Publicity: By attending the event, you consent to the capture and sharing of photos and videos taken during the event, both online and offline. The content is shared through the HumanE AI social media accounts (LinkedIn, Facebook, X).

SIG Scope and Goal

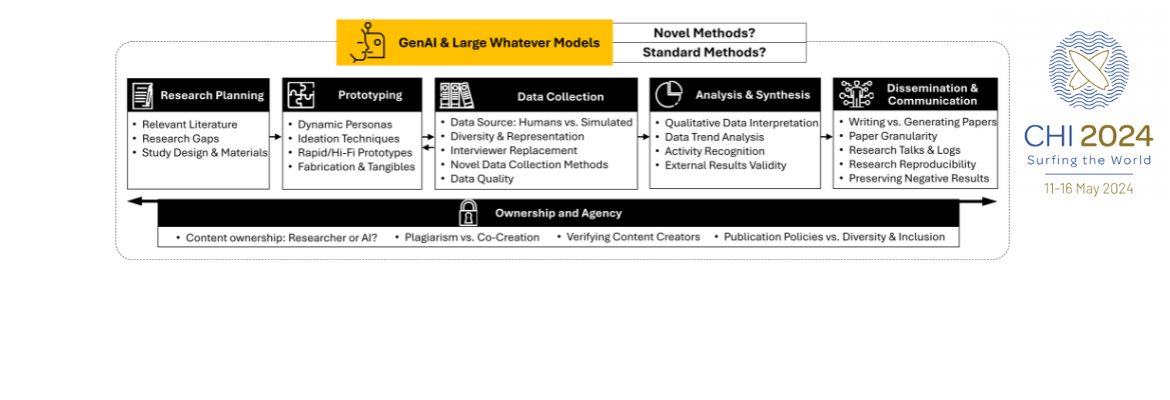

This Special Interest Group (SIG) explores the transformative impact of Generative Artificial Intelligence (GenAI) on Human-Computer Interaction (HCI) research processes. The central theme is “question zero”: when should researchers use AI tools during the research cycle, and when should they refrain? The discussion is guided by five research phases commonly used in HCI: research planning, prototyping, data collection, analysis and synthesis, and dissemination and communication.

We investigate how GenAI accelerates project cycles, enhances reproducibility, and influences inclusivity in research. We also address the challenging ethical considerations about the ownership of generated content. We aim to build a community of HCI enthusiasts to harness the early advantages of the recent groundbreaking technology and foresee challenges arising from its prevalence in the scientific community.

Organizing Team

- Passant Elagroudy, Postdoc @ German Research Center for Artificial Intelligence (DFKI), Germany

- Jie Li, Regional Research Head @ EPAM, Netherlands

- Kaisa Väänänen, Professor @ Tampere University, Finland

- Paul Lukowicz, Professor @ German Research Center for Artificial Intelligence (DFKI), Germany

- Hiroshi Ishii, Professor @ MIT Media Lab, USA

- Wendy E. Mackay, Professor @ ExSitu, Inria, LISN, Université Paris-Saclay, France

- Elizabeth F Churchill, Senior Director @ Google LLC, USA

- Anicia Peters, CEO @ National Commission for Research, Science and Technology, Namibia

- Antti Oulasvirta, Professor @ Aalto University, Finland

- Rui Prada, Professor @ INESC-ID, Instituto Superior Técnico, Universidade de Lisboa, Portugal

- Alexandra Diening, Research Head @ EPAM, Netherlands

- Giulia Barbareschi, Senior Assistant Professor @ Keio Graduate School of Media Design, Keio University, Japan

- Agnes Gruenerbl, Research Manager @ German Research Center for Artificial Intelligence (DFKI), Germany

- Midori Kawaguchi, Assistant Professor @ Keio University Graduate School of Media Design, Japan

- Abdallah El Ali, Research Scientist @ Centrum Wiskunde & Informatica (CWI), Netherlands

- Fiona Draxler, Postdoc @ University of Mannheim, Germany

- Robin Welsch, Assistant Professor @ Aalto University, Finland

- Albrecht Schmidt, Professor @ LMU Munich, Germany

What Should You Expect?

Please note that this is a tentative schedule.

- Short introduction about our motivation and background.

- Panel-like statements from the organizers about how GenAI will change the way we do research in the fields of:

  - Interaction design

  - Robotics

  - Haptics and Tangibles

  - User research in the industry

  - Ethics

- Group activity: re-imagining one of CHI’s best papers using GenAI from idea conception to publication.

- Collection of useful resources

Supporting Research Networks

This work is partially supported and funded by the following entities: the HumanE AI Network under the European Union’s Horizon 2020 ICT programme (grant agreement no. 952026), national funds through FCT, Fundação para a Ciência e a Tecnologia, under project UIDB/50021/2020 (DOI:10.54499/UIDB/50021/2020), and the European Research Council (ERC) Advanced Grant No. 321135 (CREATIV: Creating Co-Adaptive Human-Computer Partnerships).

Resources

Please note that this list is not meant to be exhaustive but rather a starting point for interested individuals. We welcome contributions by others. Please email <passant.elagroudy[at]gmail.com> if you would like to add content. Please also note that this list is not intended to endorse particular solutions.

Relevant Social Media Accounts and Organizations

- Razia Aliani: LinkedIn account about using commercial AI tools for research

- Sowmiya Rani Ph.D.: LinkedIn account about using commercial AI tools for scientific writing

- Muhammad Irfan 🧬: LinkedIn account about using commercial AI tools for scientific writing

- Stefan Harrer, PhD: LinkedIn account about how AI research is changing the way we do science

- Asad Naveed: LinkedIn account about general productivity advice for research and using GenAI tools

- Frauke Kreuter: LinkedIn account of a professor who discusses, among other things, quantitative research methods using AI

- AI4Science: Microsoft Research initiative to empower the 5th research paradigm

- The German group for digitizing research

Relevant Contributions @CHI’24

- CHI'24 Workshop: Workshop on LLMs as Research Tools: Applications and Evaluations in HCI Data Work (by Marianne Aubin Le Quere, Hope Schroeder, Casey Randazzo, Jie Gao, Ziv Epstein, Simon Tangi Perrault, David Mimno, Louise Barkhuus, Hanlin Li)

- CHI'24 Workshop: GenAICHI 2024: Generative AI and HCI at CHI 2024 (by Michael Muller, Anna Kantosalo, Mary Lou Maher, Charles Patrick Martin, Greg Walsh)

- CHI'24 Workshop: Workshop on Challenges and Opportunities of LLM-Based Synthetic Personae and Data in HCI (by Mirjana Prpa, Giovanni M Troiano, Matthew Wood, Yvonne Coady)

- CHI'24 Workshop: Workshop on Forms of Fraudulence in Human-Centered Design: Collective Strategies and Future Agenda for Qualitative HCI Research (by Aswati Panicker, Novia Nurain, Zaidat Ibrahim, Chun-Han Ariel Wang, Seung Wan Ha, Elizabeth Kaziunas, Maria K Wolters, Chia-Fang Chung)

- CHI'24 Workshop: Workshop on Dark Sides: Envisioning, Understanding, and Preventing Harmful Effects of Writing Assistants - The Third Workshop on Intelligent and Interactive Writing Assistants (by Minsuk Chang, John Joon Young Chung, Katy Ilonka Gero, Ting-Hao Kenneth Huang, Dongyeop Kang, Vipul Raheja, Sarah Sterman, Thiemo Wambsganss)

- CHI'24 Workshop: Workshop on Human Centered Evaluation and Auditing of Large Language Models (by Ziang Xiao, Wesley Hanwen Deng, Michelle S. Lam, Motahhare Eslami, Juho Kim, Mina Lee, Q. Vera Liao)

Policy Documents

- European Commission guidelines for using GenAI in scientific funding

- Elsevier’s policy for using GenAI in authoring papers

- ACM policy for using GenAI in authoring papers

- CHI policy for using GenAI in authoring papers

“Text generated from a large-scale language model (LLM) such as ChatGPT must be clearly marked where such tools are used for purposes beyond editing the author’s own text. While we will not be using tools to detect LLM-generated text, we will investigate submissions brought to our attention and will desk reject papers where LLM use is not clearly marked.”

Miscellaneous

- [Interactions article] Simulating the Human in HCD with ChatGPT: Redesigning Interaction Design with AI (by Albrecht Schmidt, Passant Elagroudy, Fiona Draxler, Frauke Kreuter, and Robin Welsch)

- [Startup Hackathon] LWM Hackathon: Enhancing Research Productivity (by Siobhan O'Neill, Anastasiya Zakreuskaya, Joanna Sorysz, Stefan Gerd Fritsch, Mohamed Selim, Marco Hirsch, Sebastian Vollmer, Georgios Spathoulas, Passant Elagroudy, and Paul Lukowicz) (website) (summary LinkedIn post) (winners)

Relevant Commercial Tools

- Check the list curated by Albrecht Schmidt for AI tools: https://www.hcilab.org/ai-tools-directory/

- We acknowledge that the list below is mainly based on two posts from Sowmiya Rani Ph.D. (LinkedIn post 1, LinkedIn post 2). We merged the two posts and mapped their content to our research stages.

Common Tasks Between Stages

💡 Common 1: Brainstorming Ideas and Structures

✅ Ayoa https://www.ayoa.com/ai/

✅ JotBot https://myjotbot.com/

✅ Paperpal Copilot https://paperpal.com/

✅ Hyperwrite https://lnkd.in/gAhVix6P

🎯Perplexity.ai: Generate research ideas & more

💡 Common 2: Summarize, make concise, reduce word count

✅ UPDF https://updf.com/updf-ai/

✅ Semrush https://lnkd.in/gKQFmqsx

✅ Popai https://www.popai.pro/

✅ Writesonic https://writesonic.com/

✅ Quillbot https://quillbot.com/

🎯Wordtune: Paraphrase like a pro

🎯Yoodli.ai: Improve your communication

💡 Common 3: Image creation tools

✅ Canva magic studio https://lnkd.in/gz7C8R-x

✅ Mind the graph https://mindthegraph.com/

✅ Biorender https://lnkd.in/g-RGpSvk

✅ Adobe firefly https://lnkd.in/ggArPjqE

✅ Imagine https://www.imagine.art/

✅ Inkscape https://inkscape.org/ Free vector drawing tool

💡 Common 4: Joker (multipurpose) tools

🎯OpenAI: Source of ChatGPT and more

Stage 1: Research Planning

- Use common 1: Brainstorming Ideas and Structures

- Use common 4: joker multipurpose tools

- 🎯Audemic.io: Listen to your PDF papers

- 🎯Kubiya.ai: Automate your entire workflow

- 🎯Notion.ai: Your personal manager

- 🎯Trello: Contribute and collaborate efficiently

- 🎯Quillbot: Quintessential research assistant

- 🎯Xmind: Mindmapping tool

💡 Finding relevant literature

🎯Endnote: A robust reference manager

🎯Litmaps: Literature mapped

🎯Mendeley: A popular reference manager

🎯Zotero: My personal fav reference manager

🎯Research rabbit: Literature search made simple

🎯Scite.ai: Literature through citation statements

Stage 2: Prototyping

- Use common 3: Image creation tools

- Use common 4: joker multipurpose tools

- 🎯Boomy: Make your own BGMs for talks (music)

Stage 3: Data Collection

- Use common 3: Image creation tools

- Use common 4: joker multipurpose tools

- 🎯Herohunt.ai: AI recruitment engine

Stage 4: Analysis and Synthesis

- Use common 2: Summarize, make concise, reduce word count

- Use common 3: Image creation tools

- Use common 4: joker multipurpose tools

- 🎯Consensus: Accurate answers with citations

- 🎯Fliki.ai: Text to audio conversion tool

Stage 5: Dissemination and Communication

- Use common 1: Brainstorming Ideas and Structures

- Use common 2: Summarize, make concise, reduce word count

- Use common 3: Image creation tools

- 🎯Jasper.ai: Generate, translate, and co-write text

💡 Creating a roadmap of the chapter content

✅ Edrawmind https://lnkd.in/gY-En2zs

✅ Whimsical https://lnkd.in/g6Bw4ARh

✅ Gitmind https://gitmind.com/

✅ Taskade https://lnkd.in/gKZGWNZr

✅ MyMap https://lnkd.in/gUvJNssk

💡 Grammar and language check tools

✅ Paperpal https://paperpal.com/

✅ Trinka https://www.trinka.ai/

✅ WordTune https://www.wordtune.com/

✅ Writefull https://www.writefull.com/

✅ WordVice https://wordvice.ai/

✅ Grammarly https://www.grammarly.com/

💡 Creating your presentation

🎯Decktopus: Presentation decks in minutes

🎯Boomy: Make your own BGMs for talks (music)

🎯Unscreen.ai: Remove background from videos

🎯Vrew.ai: Generate captions for videos